Last weekend, Xtra performed an email system "upgrade" which resulted in their customers' mail being inaccessible for 24 hours. This in itself is not necessarily bad - they did give warning that it was going to happen, and it's understandable that such things need to be done from time to time. The problems begin with the length of the outage - a full 24 hours, with some customers having no access for longer than this.

The main problems, however, relate to the consequences of this "upgrade" - and begin with those who use a mail client (i.e. not webmail) to access their email. As part of the upgrade, Xtra changed their SMTP server address - and made SSL mandatory. They do tell you that the address has changed (oddly enough, when you're trying to get to the "upgraded" webmail - but also over the phone, if you lie and tell them that your call has nothing to do with the mail server "upgrade" and so get through to a person rather than pre-recorded messages). They list the new port (465) and address (send.xtra.co.nz), but completely fail to mention that SSL is now required.

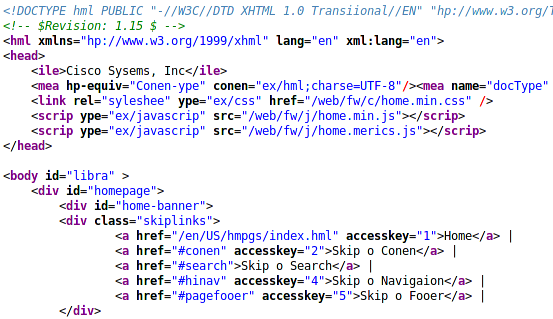

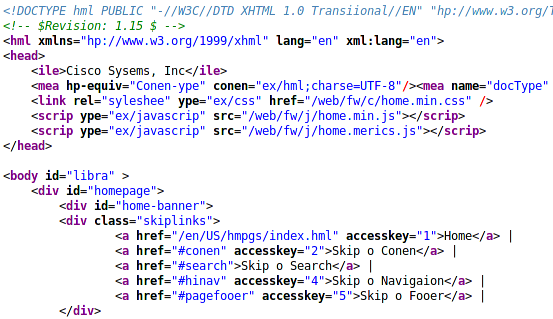

This is a problem for users who do not know a lot about computers, the Internet or email - those who have had their computer set up by family or friends, and who know how to use it but not how to configure it. My aunt is one of them: she was unable to send email for several days, until I was able to visit and sort it out. With some help, she managed to work out where to change the settings, and changed the address and port. However, since there had been no mention of it at all, she did not enable SSL - and so still could not send email. Although I know a bit about mail servers, and in fact run my own, I didn't immediately recognise port 465 as being the usual SMTP over SSL port. How anyone else is supposed to work it out, I don't know - a bit of deft googling managed to turn up this article on the Xtra site, which eventually mentions that you need to enable SSL.
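For anyone who would rather see what "port 465 with SSL" actually means than hunt through a mail client's settings dialog, here is a minimal Python sketch. The host and port are the ones Xtra listed; everything else (the function names, the example addresses, and the assumption that the server wants a username and password) is mine, not anything Xtra documented. The point is simply that on port 465 the encrypted connection has to be set up before any SMTP is spoken, which is why changing the address and port alone gets you nowhere.

```python
import smtplib
import ssl
from email.message import EmailMessage

# Address and port as listed by Xtra; all other values here are placeholders.
SMTP_HOST = "send.xtra.co.nz"
SMTP_PORT = 465  # implicit SSL: the connection is encrypted from the first byte


def build_message(sender, recipient, subject, body):
    """Build a plain-text email message (hypothetical helper)."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    msg.set_content(body)
    return msg


def send_over_ssl(msg, username, password, host=SMTP_HOST, port=SMTP_PORT):
    """Send msg over an SSL-wrapped SMTP connection.

    Assumes the server requires a login; that is a guess on my part.
    """
    context = ssl.create_default_context()
    # SMTP_SSL wraps the socket in SSL/TLS before any SMTP command is sent.
    # A plain smtplib.SMTP connection to the same port would just hang or fail.
    with smtplib.SMTP_SSL(host, port, context=context, timeout=30) as server:
        server.login(username, password)
        server.send_message(msg)
```

Calling `send_over_ssl(build_message(...), "user", "password")` with real account details would attempt the send. In a mail client, the equivalent is ticking the "use SSL" (or similarly named) box next to the outgoing server settings.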

The next problem, which is probably even worse than that one, relates to the web mail system. It used to be a relatively simple process to get to and use their webmail system - but no longer. Especially if you haven't used the new system yet.

First off, you need to use a modern browser. If your browser isn't supported, the site doesn't tell you - it just sticks you in a loop of signing in, clicking through to continue a couple of times, and then being returned to the login page. Once you find a browser in which it works, you have to go through several steps of pointless nonsense, including downloading and installing a few bits and pieces relating to their new "bubbles" - this took a few minutes on my aunt's ADSL connection; I shudder to think how long it would take on dialup.

Once you've finally managed to register for the new system, you log in and end up on an overcomplicated, customisable start page. When you eventually locate the "Mail" link and move your mouse over it, a new box "slides" out from under it to reveal a summary listing new messages - just how good this is, I'm not sure, as my aunt had no new messages, so there was a large box with a small amount of text swimming in it to that effect. Clicking on the Mail link took us to the new webmail interface - which I didn't have a good look at, but which didn't look terribly easy to use or particularly good. I think it might be using the current Yahoo! mail system, but, not having a Yahoo! account myself, I can't verify this.

Then there's the entire concept of a social networking site. I would imagine that their users would fall into two broad categories:

- Those who, like my aunt, are not at all interested in this crap; and

- Those who are interested in a social networking site, and, as a result, are already signed up to at least one of the plethora of other free social networking sites out there.

Also - I haven't investigated, so don't know if this is entirely accurate - surely using this system would be somewhat pointless, as I'd assume that only Xtra customers can get a "bubble page" or whatever it is they're calling them. Even if other people can sign up for them, will anybody who's not an Xtra customer do so? I would suggest that the answer is almost certainly "no" - at best, a few people might sign up out of morbid curiosity. This means that you're essentially restricted to networking with other Xtra users - whereas if you use any of the other social networking sites out there, you can network with anybody with access to the Internet.

I'll admit that their old webmail system was dated and was begging to be upgraded or replaced; but this is not the way they should have done it. What they've done is alienate a lot of users, confuse many more and just brass off the rest. That's just those of their customers who actually use their Xtra mail, of course - the rest of their customers won't care in the slightest.

I think I heard that Xtra were saying that "Bubble" was going to provide "an exciting range of new services that will change the way you use the internet" - I'd say that the only way it has changed the way that some people use the Internet is which provider they use it through.